Defensible standards-based web and social media archiving for compliance, eDiscovery and corporate heritage; SaaS and on-premise solutions

Hanzo specialise in building cutting-edge web archiving technology. Since mid-2014 I've worked with them to build a variety of products and tools that improve their ability to crawl and play back archives, and that give their customers better insight into what has been captured.

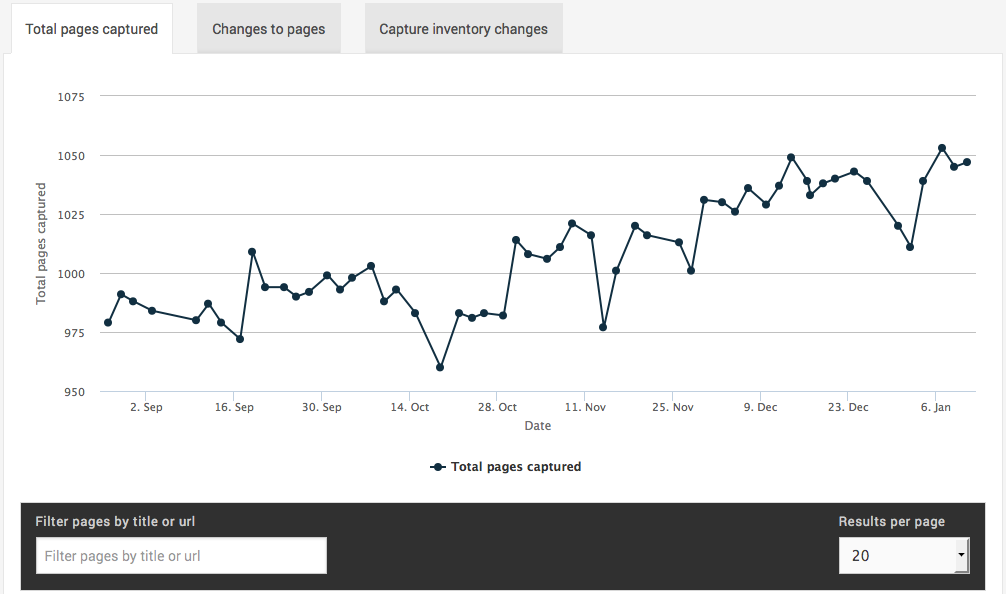

Change Detection

A key market for Hanzo is litigation, where law firms need to be able to prove what was or wasn't said by a company or individual at any point in time. A daily capture is a good way to record this data, but identifying what changed between captures by hand would be a long, manual process.

The change detection feature was born out of an R&D project I led for Hanzo, where we collected various metrics for each capture and tried to identify patterns or changes between them. I wrote an initial proof of concept (POC) for this, mostly in JavaScript, processing a sample of WARCs where we knew there were changes over time.

The key takeaways from the exercise were that we could get quite a long way with word counts, hashing and page counts; while there'd still be a lot of noise, which tends to be the norm in web capture, we could demonstrably detect changes between captures, enough so that it'd be easier for the end-user to find what they were looking for.
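As a rough illustration of the word count and hashing idea, here is a minimal sketch in Python (the original POC was mostly JavaScript and worked on WARCs directly). It assumes each capture has already been reduced to a mapping of URL to extracted text; the names `page_metrics` and `detect_changes` are hypothetical and not taken from Hanzo's code.

```python
import hashlib
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class PageMetrics:
    word_count: int
    content_hash: str


def page_metrics(text: str) -> PageMetrics:
    """Compute simple per-page metrics from extracted text."""
    # Collapse whitespace so formatting-only differences do not alter the hash.
    words = re.findall(r"\S+", text)
    normalised = " ".join(words)
    digest = hashlib.sha256(normalised.encode("utf-8")).hexdigest()
    return PageMetrics(word_count=len(words), content_hash=digest)


def detect_changes(previous: dict, current: dict) -> dict:
    """Compare two captures given as {url: extracted_text} mappings."""
    report = {"added": [], "removed": [], "changed": []}
    for url in sorted(set(previous) | set(current)):
        if url not in previous:
            report["added"].append(url)
        elif url not in current:
            report["removed"].append(url)
        elif page_metrics(previous[url]) != page_metrics(current[url]):
            report["changed"].append(url)
    return report
```

Comparing `len(previous)` with `len(current)` gives the page count signal. Dynamic content such as timestamps or rotating adverts will still show up as changes here, which is the kind of noise described above.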

Diff Tool

After the change detection project we were able to identify changes between captures, but we still needed a way to display them to the end user. The initial brief was to produce image differentials. I tried this, but the results were of pretty low quality.

Hanzo already had playback tech that could replay a capture with high precision, both via URL rewriting and via their custom browser, streaming the data from S3. It was faster than you'd think. I decided to build the compare tool by streaming this data through htmldiff before serving it to the user, which let me offer both split and unified differentials. The result was really cool and worked in more cases than you'd expect: it produced a useful differential basically everywhere except on certain JavaScript-heavy pages, such as social media.
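To show the difference between the two views, here is a small sketch that swaps htmldiff for Python's standard `difflib` and works on plain text lines rather than streamed HTML. It is an illustration of unified versus split output only, not the compare tool itself; `unified_view` and `split_view` are hypothetical names.

```python
import difflib


def unified_view(old: str, new: str) -> list:
    """Return a unified diff: one column, with -/+ prefixes on changed lines."""
    return list(
        difflib.unified_diff(
            old.splitlines(),
            new.splitlines(),
            fromfile="capture-a",
            tofile="capture-b",
            lineterm="",
        )
    )


def split_view(old: str, new: str) -> list:
    """Return (left, right) rows for a side-by-side view of two captures."""
    a, b = old.splitlines(), new.splitlines()
    rows = []
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    for _tag, i1, i2, j1, j2 in matcher.get_opcodes():
        left, right = a[i1:i2], b[j1:j2]
        # Pad the shorter side with blanks so both columns stay aligned.
        height = max(len(left), len(right))
        left += [""] * (height - len(left))
        right += [""] * (height - len(right))
        rows.extend(zip(left, right))
    return rows
```

The unified form suits a single-column page with insertions and deletions marked inline, while the split form maps naturally onto two replayed captures shown next to each other.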

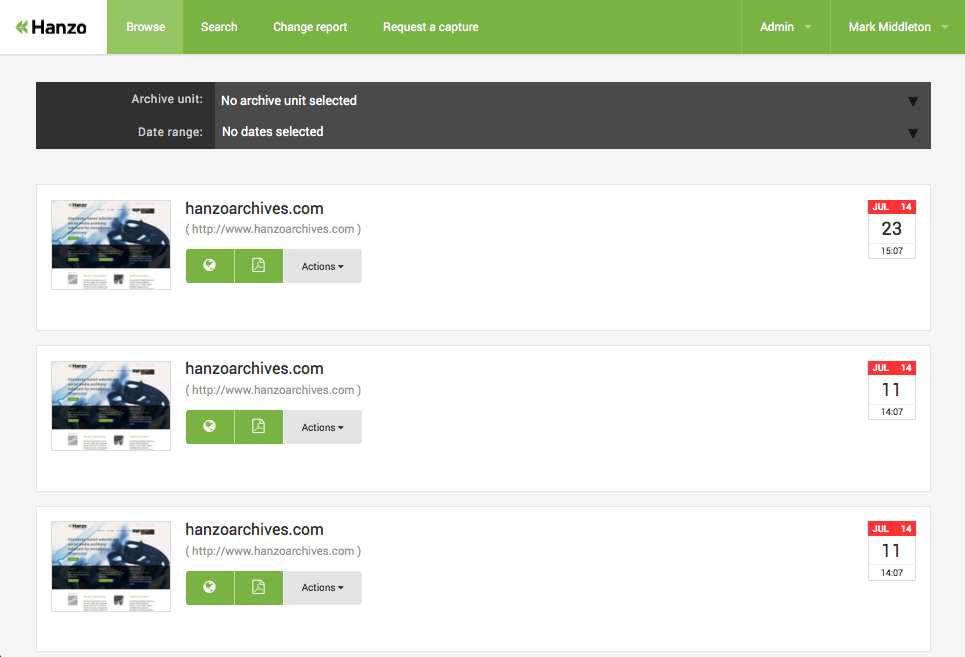

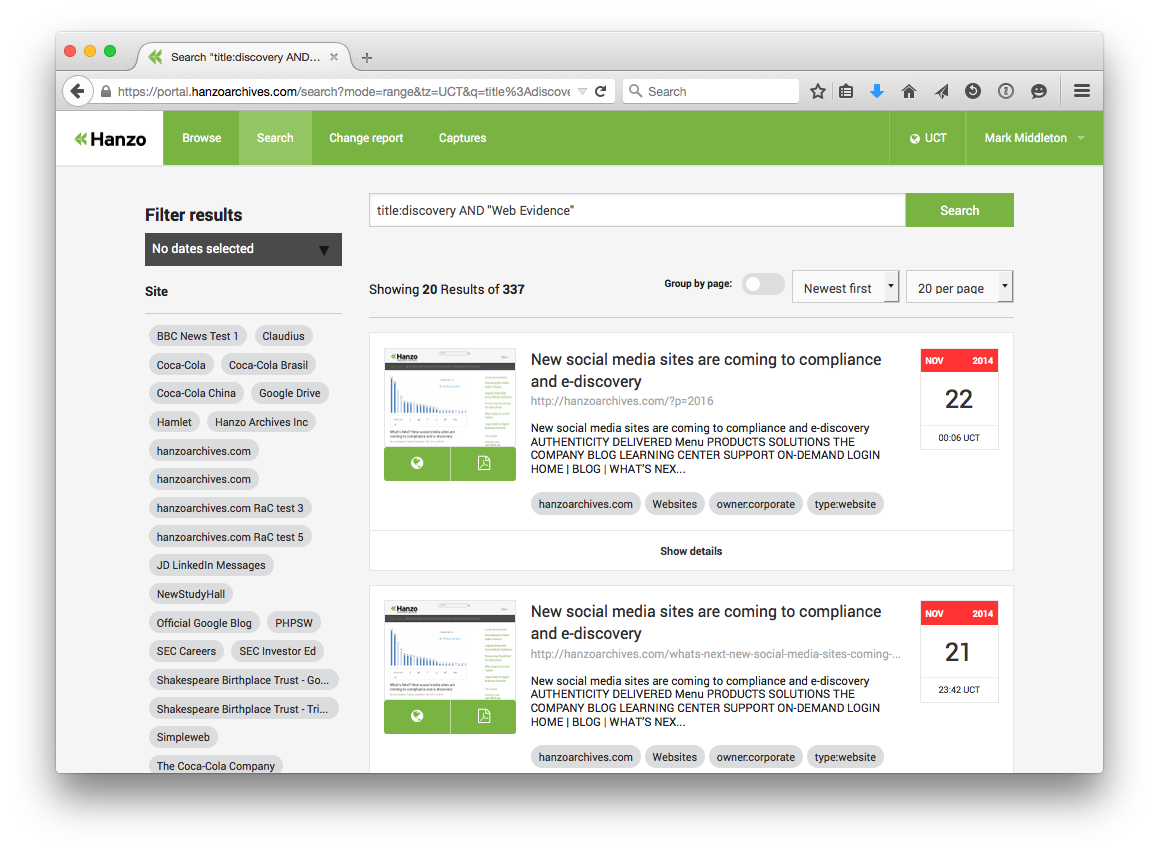

Search

Hanzo populate a Solr index as part of their crawl strategy. We integrated this so that users could search across time, with results either grouped by URL or ungrouped. Text extraction and tagging meant that finding the captured content you were looking for worked fairly well.
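The grouped and ungrouped modes map onto Solr's result grouping parameters (`group` and `group.field`). The sketch below only builds the query string; the field names `text`, `url` and `capture_date` are assumptions for illustration and may not match Hanzo's schema, and the search term is not escaped for Solr query syntax.

```python
from urllib.parse import urlencode


def build_search_params(term: str, grouped: bool) -> str:
    """Build a Solr query string for searching captures across time."""
    params = {
        "q": f"text:{term}",          # assumed full-text field
        "sort": "capture_date desc",  # assumed capture timestamp field
        "wt": "json",
    }
    if grouped:
        # Collapse every capture of the same page into one group per URL.
        params["group"] = "true"
        params["group.field"] = "url"  # assumed URL field
    return urlencode(params)
```

The resulting string would be appended to a collection's `/select` endpoint.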

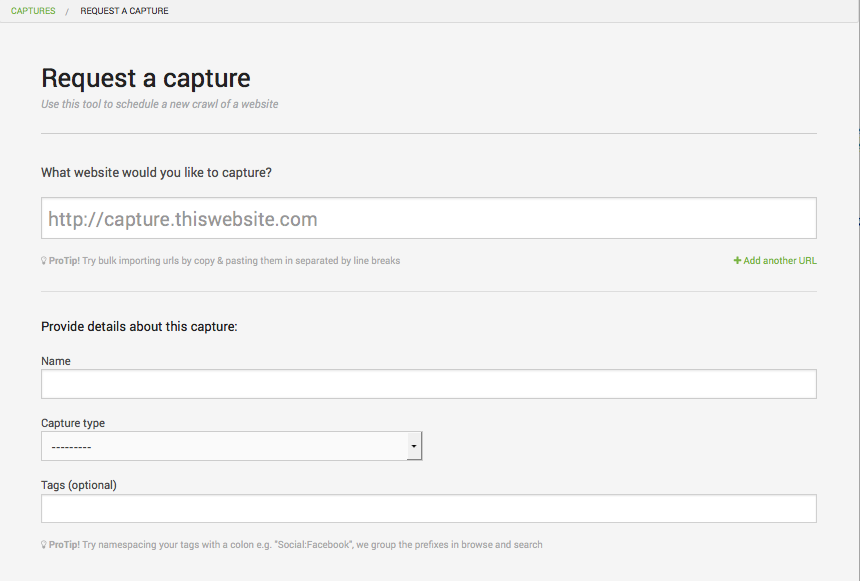

Request a capture

Web archiving is complicated, to say the least. Hanzo have a whole team of crawl engineers who specialise in the art of performing a successful web capture. There are lots of knobs and levers, which for the most part lived in command-line arguments until the web application was born.

Request a capture is a project Hanzo drafted to put these powers in the hands of their end users. Working closely with their CTO and Development Manager I helped them crystallise the options that were available and how we'd present them to end-users in a way that they'd understand.

The result was a seemingly simple form with a lot of power. Users specify the capture type, be that a standard website or a Facebook or Twitter page, which governs which crawl driver is used. They can also supply settings for how many hops to go off-domain and whether to include or exclude certain URLs.
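The shape of such a request can be sketched as a small data structure. This is an illustration under assumptions rather than Hanzo's actual model: the driver names, the `CaptureRequest` class and the use of glob-style patterns for include and exclude rules are all hypothetical.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase

# Hypothetical mapping from the capture type chosen in the form to a crawl driver.
CRAWL_DRIVERS = {
    "website": "standard-crawler",
    "facebook": "facebook-driver",
    "twitter": "twitter-driver",
}


@dataclass
class CaptureRequest:
    seed_url: str
    capture_type: str = "website"
    off_domain_hops: int = 0
    include: list = field(default_factory=list)
    exclude: list = field(default_factory=list)

    def __post_init__(self):
        # Reject values the form should never have submitted.
        if self.capture_type not in CRAWL_DRIVERS:
            raise ValueError(f"unknown capture type: {self.capture_type}")
        if self.off_domain_hops < 0:
            raise ValueError("off_domain_hops must be zero or greater")

    @property
    def driver(self) -> str:
        """The crawl driver is governed by the capture type."""
        return CRAWL_DRIVERS[self.capture_type]

    def allows(self, url: str) -> bool:
        """Exclude patterns win; an empty include list allows everything else."""
        if any(fnmatchcase(url, pattern) for pattern in self.exclude):
            return False
        return not self.include or any(
            fnmatchcase(url, pattern) for pattern in self.include
        )
```

For example, `CaptureRequest("https://example.com", capture_type="twitter", exclude=["*/login*"])` would select the Twitter driver and skip any URL containing `/login`.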

References

Tom Holder, Technical Director at Simpleweb

Steve has an innate technical ability which is given further strength by his level of experience and general desire to do a good job. I have enjoyed working with Steve over and above many others because of his ability to gauge when a problem needs to be solved right and when a problem needs to be solved quickly. He always strives to do an excellent job regardless and makes the right engineering decisions. He learns new technology at an exceptional rate and is often the go-to person to solve a technical challenge. As a team player, Steve appears equally happy to integrate with an existing team and roll his sleeves up directly working on a project, help mentor less experienced developers, or lead a project as a technical oversight. I have always been happy taking Steve into customer meetings safe in the knowledge that he will answer questions in a composed and accurate manner whilst not being afraid to give his opinion on technical issues. Steve is well liked and highly respected by his colleagues from all areas of the business. I will miss working with Steve and am confident in recommending him for any technical position.

Mark Williamson, Hanzo CTO

Steve's been a strong contributor to our product software, giving excellent advice and guiding our design to ensure that we give our customers a good user experience. His coding has been top notch and he’s helped manage other team members to ensure that all aspects of the delivery have been what we need.